Links = Rank

Old Google (pre-Panda) was, to a large degree, the following: links = rank.

Once you had enough links to a site you could literally pour content into a site like water and have the domain's aggregate link authority help anything on that site rank well quickly.

As much as PageRank was hyped & important, having a diverse range of linking domains and keyword-focused anchor text was just as important.

Brand = Rank

After Vince and then Panda, a site's brand awareness (or, rather, the ranking signals that might best simulate it) was folded into the ability to rank well.

Panda considered factors beyond links & when it first rolled out it would clip anything on a particular domain or subdomain. Some sites like HubPages shifted their content into subdomains by users. And some aggressive spammers would rotate their entire site onto different subdomains repeatedly each time a Panda update happened. That allowed those sites to immediately recover from the first couple of Panda updates, but eventually, Google closed off that loophole.

Any signal which gets relied on eventually gets abused intentionally or unintentionally. And over time it leads to a "sameness" of the result set unless other signals are used:

Google is absolute garbage for searching anything related to a product. If I'm trying to learn something invariably I am required to search another source like Reddit through Google. For example, I became introduced to the concept of weighted blankets and was intrigued. So I Google "why use a weighted blanket" and "weighted blanket benefits". Just by virtue of the word "weighted blanket" being in the search I got pages and pages of nothing but ads trying to sell them, and zero meaningful discourse on why I would use one

Getting More Granular

Over time, as Google got more refined with Panda, broad-based sites outside of the news vertical often fell on tough times unless they were dedicated to some specific media format or had strong user engagement metrics, like a strong social network site. That is a big part of why the New York Times sold About.com for less than they paid for it & after IAC bought it they broke it down into a variety of sites like Verywell (health), The Spruce (home decor), the Balance (personal finance), Lifewire (technology), Tripsavvy (travel) and ThoughtCo (education & self-improvement).

Penguin further clipped aggressive anchor text built on low-quality links. When the Penguin update rolled out Google also rolled out an on-page spam classifier to further obfuscate the update. And the Penguin update was sandwiched by Panda updates on either side, making it hard for people to reverse engineer any signal out of weekly winners and losers lists from services that aggregate massive amounts of keyword rank tracking data.

So much of the link graph has been decimated that Google reversed their stance on nofollow: on March 1st of this year they started treating it as a hint rather than a directive for ranking purposes. Many mainstream media websites were overusing nofollow or not citing sources at all, so this additional layer of obfuscation on Google's part will allow them to find more signal in that noise.

May 4, 2020, Algo Update

On May 4th Google rolled out another major core update.

Later today, we are releasing a broad core algorithm update, as we do several times per year. It is called the May 2020 Core Update. Our guidance about such updates remains as we’ve covered before. Please see this blog post for more about that:webmasters.googleblog.com/2019/08/core-u…

I saw some sites which had their rankings suppressed for years see a big jump. But many things changed at once.

Wedge Issues

On some political search queries which were primarily classified as being news related Google is trying to limit political blowback by showing official sites and data scraped from official sites instead of putting news front & center.

"Google’s pretty much made it explicit that they’re not going to propagate news sites when it comes to election related queries and you scroll and you get a giant election widget in your phone and it shows you all the different data on the primary results and then you go down, you find Wikipedia, you find other like historical references, and before you even get to a single news article, it’s pretty crazy how Google’s changed the way that the SERP is intended."

That change reflects the permanent change to the news media ecosystem brought on by the web.

The Internet commoditized the distribution of facts. The "news" media responded by pivoting wholesale into opinions and entertainment.

YMYL

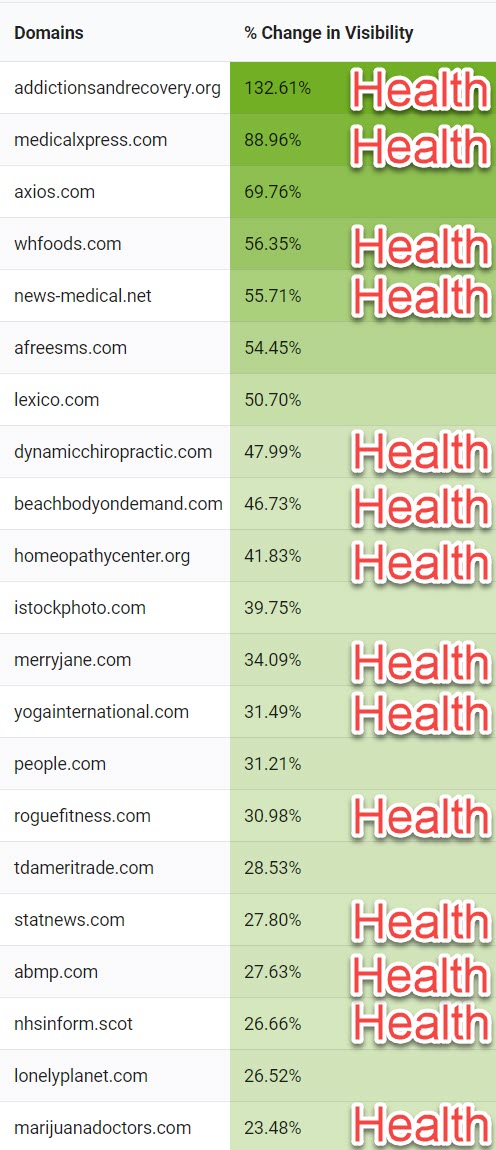

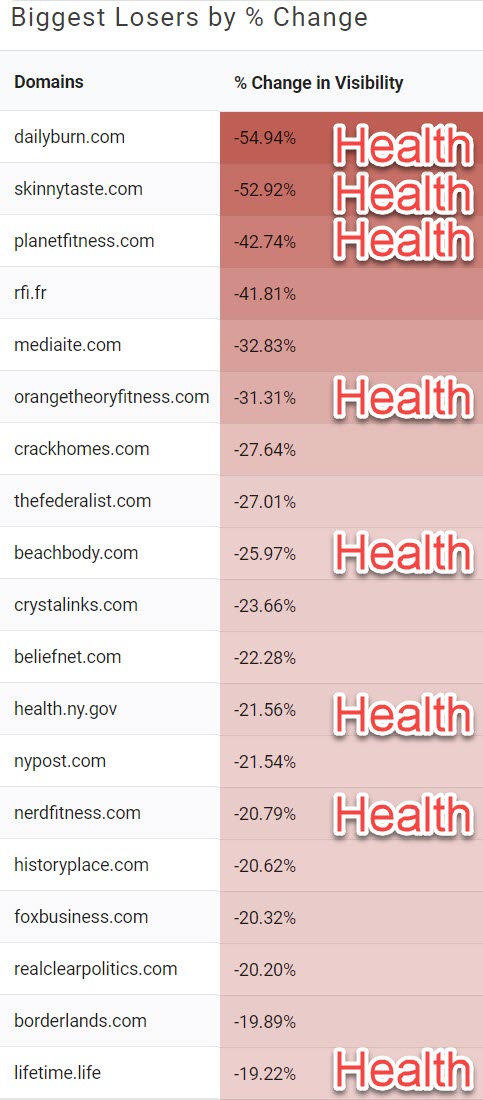

A blog post by Lily Ray from Path Interactive used Sistrix data to show many of the sites which saw high volatility were in the healthcare vertical & other your money, your life (YMYL) categories.

Aggressive Monetization

One of the more interesting pieces of feedback on the update was from Rank Ranger, where they looked at particular pages that jumped or fell hard on the update. They noticed sites that put ads or ad-like content front and center may have seen sharp falls on some of those big money pages which were aggressively monetized:

Seeing this all but cements the notion (in my mind at least) that Google did not want content unrelated to the main purpose of the page to appear above the fold to the exclusion of the page's main content! Now for the second wrinkle in my theory.... A lot of the pages being swapped out for new ones did not use the above-indicated format where a series of "navigation boxes" dominated the page above the fold.

The above shift had a big impact on some sites which are worth serious money. Intuit paid over $7 billion to acquire Credit Karma, but their credit card affiliate pages recently slid hard.

Credit Karma lost 40% traffic from May core update. That’s insane, they do major TV ads and likely pay millions in SEO expenses. Think about that folks. Your site isn’t safe. Google changes what they want radically with every update, while telling us nothing!

The above sort of shift reflects Google getting more granular with their algorithms. Early Panda was all or nothing. Then it started to have different levels of impact throughout different portions of a site.

The brand was sort of a band-aid or a rising tide that lifted all (branded) boats. Now we are seeing Google get more granular with their algorithms where a strong brand might not be enough if they view the monetization as being excessive. That same focus on page layout can have a more adverse impact on small niche websites.

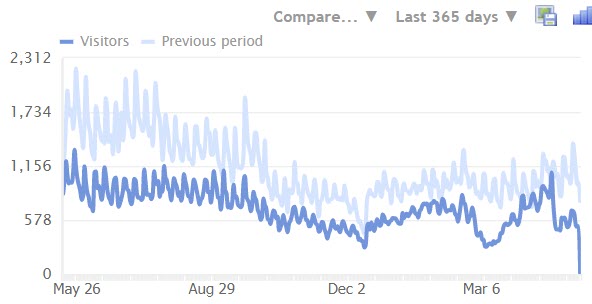

One of my old legacy clients had a site that was primarily monetized by the Amazon affiliate program. About a month ago Amazon chopped affiliate commissions in half & then the aggressive ad placement caused search traffic to the site to get chopped in half when rankings slid on this update.

Their site has been trending down over the past couple of years largely due to neglect as it was always a small side project. They recently improved some of the content about a month or so ago and that ended up leading to a bit of a boost, but then this update came. As long as that ad placement doesn't change the declines are likely to continue.

They just recently removed that ad unit, but that meant another drop in income, as until there is another big algo update they're likely to stay at around half search traffic. So now they have a half of a half. Good thing the site did not have any full-time employees or they'd be among the millions of newly unemployed. That experience though really reflects how websites can be almost like debt-levered companies in terms of going under virtually overnight. Who can have revenue slide around 88% and then fund increased investment in the property out of the remaining 12% while they wait a quarter year or more for the site to be restored?

"If you have been negatively impacted by a core update, you (mostly) cannot see recovery from that until another core update. In addition, you will only see recovery if you significantly improve the site over the long-term. If you haven’t done enough to improve the site overall, you might have to wait several updates to see an increase as you keep improving the site. And since core updates are typically separated by 3-4 months, that means you might need to wait a while."

Almost nobody can afford to do that unless the site is just a side project.
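The "half of a half" arithmetic above compounds quickly. Here is a minimal sketch of it in Python. The first two multipliers (commissions halved, search traffic halved) come from the account above. The third is an assumption: the size of the income drop from removing the ad unit is not stated, so the sketch supposes it was also roughly a halving, which is one reading consistent with the roughly 88% slide mentioned. The function name `remaining_revenue` is just for illustration.

```python
def remaining_revenue(multipliers):
    """Multiply successive revenue multipliers to get the fraction of revenue left."""
    remaining = 1.0
    for m in multipliers:
        remaining *= m
    return remaining


# Commissions cut in half, then search traffic cut in half (both from the post).
half_of_a_half = remaining_revenue([0.5, 0.5])

# ASSUMPTION: removing the ad unit roughly halves income again.
# The post does not give this figure; it is only one reading of "around 88%".
after_ad_removal = remaining_revenue([0.5, 0.5, 0.5])

print(f"half of a half: {half_of_a_half:.1%} left, {1 - half_of_a_half:.1%} lost")
print(f"after ad removal: {after_ad_removal:.1%} left, {1 - after_ad_removal:.1%} lost")
```

Each cut looks survivable on its own. It is the multiplication that makes the site behave like a levered company.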

Google could choose to run major updates more frequently, allowing sites to recover more quickly, but they gain economic benefit in defunding SEO investments & adding opportunity cost to aggressive SEO strategies by ensuring ranking declines on major updates last a season or more.

Choosing a Strategy vs Letting Things Come at You

They probably should have lowered their ad density when they did those other upgrades. If they had, they likely would have seen rankings at worst flat or likely up as some other competing sites fell. Instead, they are rolling with half of a half on the revenue front. Glenn Gabe preaches the importance of fixing all the problems you can find rather than just fixing one or two things and hoping it is enough. If you have a site that is on the edge you sort of have to consider the trade-offs between various approaches to monetization.

- monetize it lightly and hope the site does well for many years

- monetize it slightly aggressively while using the extra income to further improve the site elsewhere and ensure you have enough to get by any lean months

- aggressively monetize the site shortly after a major ranking update if it was previously lightly monetized & then hope to sell it off a month or two later before the next major algorithm update clips it again

Outcomes will depend partly on timing and luck, but consciously choosing a strategy is likely to yield better returns than doing a bit of mix-n-match while having your head buried in the sand.

Reading the Algo Updates

You can spend 50 or 100 hours reading blog posts about the update and learn precisely nothing in the process if you do not know which authors are bullshitting and which authors are writing about the correct signals.

But how do you know who knows what they are talking about?

It is more than a bit tricky as the people who know the most often do not have any economic advantage in writing specifics about the update. If you primarily monetize your own websites, then the ignorance of the broader market is a big part of your competitive advantage.

Making things even trickier, the less you know the more likely Google would be to trust you with relaying their official messaging. If you syndicate their messaging without questioning it, you get a treat - more exclusives. If you question their messaging in a way that undermines their goals, you'd quickly become persona non grata - something CNET learned many years ago when they published Eric Schmidt's address.

It would be unlikely you'd see the following sort of Tweet from, say, Blue Hat SEO or Fantomaster or such.

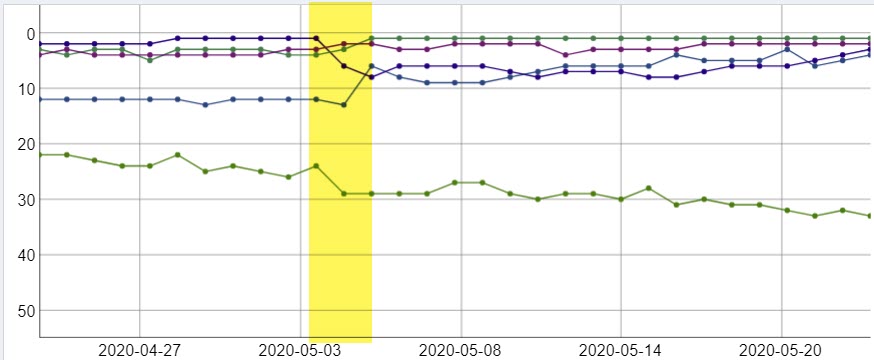

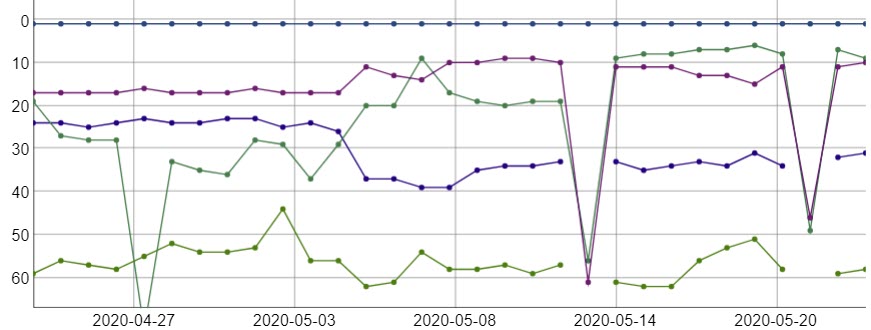

To be able to read the algorithms well you have to have some market sectors and keyword groups you know well. Passively collecting an archive of historical data makes the big changes stand out quickly.

Everyone who depends on SEO to make a living should subscribe to an online rank tracking service or run something like Serposcope locally to track at least a dozen or two dozen keywords. If you track rankings locally it makes sense to use a set of web proxies and run the queries slowly through each so you don't get blocked.

You should track a diverse range of keywords to get a true sense of the algorithmic changes.

- a couple different industries

- a couple different geographic markets (or at least some local-intent vs national-intent terms within a country)

- some head, mid-tail and longtail keywords

- sites of different size, age & brand awareness within a particular market

Some tools make it easy to quickly add or remove graphing of anything which moved big and is in the top 50 or 100 results, which can help you quickly find outliers. And some tools also make it easy to compare their rankings over time. As updates develop you'll often see multiple sites making big moves at the same time & if you know a lot about the keyword, the market & the sites you can get a good idea of what might have been likely to change to cause those shifts.
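If your rank tracker lets you export data, the "find whatever moved big in the top 50 or 100" step can be roughly sketched in a few lines of Python. Everything here is hypothetical: the `big_movers` function, the data layout, the example keywords paired with placeholder domains, and the thresholds are assumptions for illustration, not the export format or logic of any particular tool.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical layout: {(keyword, domain): (rank_before, rank_after)}.
# None means the page was not found in the tracked results.
RankHistory = Dict[Tuple[str, str], Tuple[Optional[int], Optional[int]]]


def big_movers(history: RankHistory, top_n: int = 50, min_shift: int = 10) -> List[tuple]:
    """List entries that were inside the top_n before or after an update and
    moved at least min_shift positions. A positive shift is an improvement."""
    movers = []
    for (keyword, domain), (before, after) in history.items():
        # Treat "not ranking" as sitting just outside the tracked range.
        b = before if before is not None else top_n + 1
        a = after if after is not None else top_n + 1
        if min(b, a) > top_n:
            continue  # never in the tracked range, nothing to learn
        shift = b - a
        if abs(shift) >= min_shift:
            movers.append((keyword, domain, before, after, shift))
    # Largest moves first, regardless of direction.
    movers.sort(key=lambda row: abs(row[4]), reverse=True)
    return movers


# Made-up example data with placeholder domains.
history: RankHistory = {
    ("weighted blanket benefits", "example-a.com"): (4, 31),
    ("weighted blanket benefits", "example-b.com"): (22, 6),
    ("best credit cards", "example-c.com"): (3, 5),
    ("best credit cards", "example-d.com"): (None, 9),
}

for keyword, domain, before, after, shift in big_movers(history):
    print(f"{shift:+4d}  {domain:15s} {keyword} ({before} -> {after})")
```

Small wobbles like a move from 3 to 5 get filtered out, which leaves the handful of sites worth a closer manual look.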

Once you see someone mention outliers that most people miss, and those outliers align with what you see in your own data set, your level of confidence increases and you can spend more time trying to unravel what signals changed.

I've read influential industry writers mention that links were heavily discounted on this update. I have also read Tweets like this one which could potentially indicate the opposite.

Imagine being @dannysullivan & having to do PR for an update that increased Pinterest’s visibility by 30%.

Pinterest (an image site) now ranks on casino terms & in many other markets where it doesn’t belong.

What an embarrassing update. User intent data must’ve been corrupted.

Check out gettyimages.com . Up even more than Pinterest and ranking for some real freaky shit.

If I had little to no data, I wouldn't be able to get any signal out of that range of opinions. I'd sort of be stuck at "who knows."

By tracking my own data I can quickly figure out which message is more in line with what I saw in my subset of data & form a more solid hypothesis.

No Single Smoking Gun

As Glenn Gabe is fond of saying, sites that tank usually have multiple major issues.

Google rolls out major updates infrequently enough that they can sandwich a couple different aspects into major updates at the same time in order to make it harder to reverse engineer updates. So it does help to read widely with an open mind and imagine what signal shifts could cause the sorts of ranking shifts you are seeing.

Sometimes site-level data is more than enough to figure out what changed, but as the above Credit Karma example showed sometimes you need to get far more granular and look at page-level data to form a solid hypothesis.

As the World Changes, the Web Also Changes

About 15 years ago online dating was seen as a weird niche for recluses who perhaps typically repulsed real people in person. Now there are all sorts of niche specialty dating sites including a variety of DTF type apps. What was once weird & absurd has over time become normal.

The COVID-19 scare is going to cause lasting shifts in consumer behavior that accelerate the movement of commerce online. A decade of change will happen in a year or two across many markets.

Telemedicine will grow quickly. Facebook is adding commerce features directly onto its platform through partnering with Shopify. Spotify is spending big money to buy exclusive rights to distribute widely followed podcasters like Joe Rogan. Uber recently offered to acquire GrubHub. Google and Apple will continue adding financing features to their mobile devices. Movie theaters have lost much of their appeal.

Tons of offline "value" businesses ended up having no value after months of revenue disappearing while large outstanding debts accumulated interest. There is a belief that some of those brands will have strong latent brand value that carries over online, but if they were weak even when the offline stores acting like interactive billboards subsidized consumer awareness of their brands, then as those stores close the consumer awareness & loyalty from in-person interactions will also dry up. A shell of a company rebuilt around the Toys "R" Us brand is unlikely to beat out Amazon's parallel offering or a company that still runs stores offline.

Big box retailers like Target & Walmart are growing their online sales at hundreds of percent year over year.

There will be waves of bankruptcies, dramatic shifts in commercial real estate prices (already reflected in plunging REIT prices), and more people working remotely (shifting residential real estate demand from the urban core back out into suburbs).

People who work remotely are easier to hire and easier to fire. Those who keep leveling up their skills will eventually get rewarded while those who don't will rotate jobs every year or two. The lack of stability will increase demand for education, though much of that incremental demand will be around new technologies and specific sectors - certificates or informal training programs instead of degrees.

More and more activities will become normal online activities.

The University of California has about a half-million students & in the fall semester, they are going to try to have most of those classes happen online. How much usage data does Google gain as thousands of institutions put more and more of their infrastructure and service online?

Colleges have to convince students for the next year that a remote education is worth every bit as much as an in-person one, and then pivot back before students actually start believing it.

It’s like only being able to sell your competitor’s product for a year.

A lot of B & C level schools are going to go under as the like-vs-like comparison gets easier. Back when I ran a membership site here a college paid us to have students gain access to our membership area of the site. As online education gets normalized many unofficial trade-related sites will look more economically attractive on a relative basis.
